Recently a customer with a 4-disk RAID5 array backed by a MegaRAID controller came to us because their RAID volume was missing. The virtual disk (VD) exported by the RAID controller had disappeared!

The logs indicated that it was deleted, but as far as we can tell no one was on the system at the time. Maybe this was a hardware/firmware error, or user error, but either way the information that defines the RAID volume was no longer available.

We were able to use the Linux RAID 5 module to recover the missing data! Read on:

Hardware RAID Volumes

When a volume is deleted, the RAID controller removes the volume descriptor on the physical disks but it does not destroy the data itself; the data is still there. There are ways to recover the data using the controller itself. MegaRAID volume recovery documentation suggests that you re-create the volume using the same parameters and disk order as the original RAID. For example, if you know that it was a 256k stripe then you can recreate the array with the same disk ordering and same stripe size. However, for a RAID volume that has been in service for years, how can this possibly be known unless someone wrote it down?

Because the controller would then stamp the disks with a new header and leave the data alone, theoretically there will be no data loss and the array will continue as it had originally. However, this procedure comes with quite a bit of risk: if the parameters are off then you can introduce data corruption. Thus, you should back up each disk individually as a raw full disk image. Unfortunately this takes a long time and is far from convenient. If you get the parameters wrong then the data should be restored before trying again to guarantee consistency, and that takes even longer.

Guarantee Recovery Without Data Loss

Recovery in place was the only option because it would take too long to do full backups during each iteration. However, we also had to guarantee that the recovery process would not introduce failures that could lead to data corruption. For example, if we had chosen a 64k stripe size but the array had been built with a 256k stripe size, then the data would be incorrect. While it is probably safe to try multiple stripe sizes without initialization by the RAID controller, there is the risk of technician error causing data loss during this process.

You should always avoid single-shot processes with the risk of corrupting data. In this case terabytes of scientific data were at risk, and I certainly did not trust the opaque behavior of a hardware RAID controller card that might try to initialize an array with invalid data when I did not want it to. Using a procedure provided by the RAID controller that requires several attempts, each with an additional risk of losing data, is unnerving to say the least!

I would much prefer a method that is more likely to succeed, and certainly with the guarantee that it is impossible to lose data. When working with customer data it is imperative that every test we make along the way during the process of data recovery is guaranteed not to make things worse.

How to use Linux for RAID Recovery

This is where Linux comes in: using the Linux loopback driver we can enforce read-only access to the disks. By using the RAID 5 support in the Linux `dm-raid` module we can attempt to reconstruct the array by guessing its parameters. This allows us a limitless number of tries and the guarantee that the process of trying to recover the data never causes corruption, whether or not it succeeds.

First we started by exporting the disks on the RAID controller as JBOD volumes. This allows Linux to see the raw volumes as they were, without being modified by the RAID controller firmware:

storcli64 /c0 set jbod=on

Once the drives are available to the operating system as raw disks, we used the Linux loopback driver to configure them as read only volumes. For example:

losetup --read-only /dev/loop0 /dev/sdX

losetup --read-only /dev/loop1 /dev/sdY

losetup --read-only /dev/loop2 /dev/sdZ

losetup --read-only /dev/loop3 /dev/sdW
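The same setup can be scripted so that the mapping between loop devices and disks is written down in one place. The sketch below is a dry run that only prints the commands; the disk names are the same placeholders used above, and the function name `print_losetup_cmds` is made up for illustration.

```shell
# Dry run: print one read-only losetup command per disk, in order.
# The disk paths are placeholders; substitute the real JBOD devices.
print_losetup_cmds() {
    local i=0 disk
    for disk in "$@"; do
        echo "losetup --read-only /dev/loop$i $disk"
        i=$((i + 1))
    done
}

print_losetup_cmds /dev/sdX /dev/sdY /dev/sdZ /dev/sdW
```

After running the real commands, `blockdev --getro /dev/loop0` should print 1 for a read-only device, which is a cheap check to make before going any further.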

Now that the volumes are read only we can attempt to construct them using the Linux RAID 5 device mapper target. There are several major problems:

- We do not know the stripe size.

- We do not know the on-disk format used by the RAID controller.

- Worse than that, we do not know the disk ordering that the RAID controller selected when it built the array.

This makes for quite a few unknown variables, and only one combination will be correct. In our case there were only 4 disks, so there are 24 possible disk orderings (4! = 4*3*2*1 = 24).

Now it is a matter of trial and error. We can use the computer to minimize the amount of typing we have to do, but each result still needs manual inspection to make sure that the ordering is useful and correct. These are the possible orderings:

[0,1,2,3], [0,1,3,2], [0,2,1,3], [0,2,3,1], [0,3,1,2], [0,3,2,1],

[1,0,2,3], [1,0,3,2], [1,2,0,3], [1,2,3,0], [1,3,0,2], [1,3,2,0],

[2,0,1,3], [2,0,3,1], [2,1,0,3], [2,1,3,0], [2,3,0,1], [2,3,1,0],

[3,0,1,2], [3,0,2,1], [3,1,0,2], [3,1,2,0], [3,2,0,1], [3,2,1,0]
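For arrays with more disks, typing the orderings by hand stops being practical. As a rough sketch (not the method we used, which was the hard-coded list), a small recursive shell function can generate them; the name `permute` is hypothetical and the function assumes every item is distinct.

```shell
# Print every ordering of the given items, one per line.
# $1 is the prefix built so far; the remaining arguments are the
# items that have not been placed yet (they must all be distinct).
permute() {
    local prefix="$1" item rest other
    shift
    if [ "$#" -eq 0 ]; then
        echo "$prefix"
        return
    fi
    for item in "$@"; do
        # Collect every remaining item except the one just chosen.
        rest=""
        for other in "$@"; do
            [ "$other" = "$item" ] || rest="$rest $other"
        done
        # $rest is intentionally unquoted so it splits back into arguments.
        permute "${prefix:+$prefix }$item" $rest
    done
}

permute "" 0 1 2 3
```

The output is in the same order as the list above, so line 23 of the output corresponds to the 23rd ordering in the list.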

We permuted all possible disk orderings and passed them through to the `dm-raid` module to create the device mapper target:

dmsetup create foo --table '0 23438817282 raid raid5_la 1 128 4 - 7:0 - 7:1 - 7:2 - 7:3'
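The fields in that table are easy to get wrong, so it may help to spell them out. In the line above, `1 128` means one RAID parameter, a chunk size of 128 sectors (64k), `4` is the number of devices, and each `- 7:N` pair is "no metadata device" followed by the major:minor number of a loop device. The sketch below builds the same string from its parts without creating anything; `build_table` is a hypothetical helper, not part of dmsetup.

```shell
# Build a dm-raid table line (dry run; nothing is created).
#   $1 = volume length in 512-byte sectors
#   $2 = RAID type, e.g. raid5_la
#   $3 = stripe (chunk) size in KiB
#   remaining arguments = loop device minor numbers, in the order to test
build_table() {
    local sectors="$1" fmt="$2" chunk_kib="$3" devs="" minor
    shift 3
    # dm-raid takes the chunk size in 512-byte sectors: 64 KiB -> 128.
    local chunk=$((chunk_kib * 1024 / 512)) count=$#
    for minor in "$@"; do
        # "-" means no metadata device; 7 is the loop driver's major number.
        devs="$devs - 7:$minor"
    done
    echo "0 $sectors raid $fmt 1 $chunk $count$devs"
}

build_table 23438817282 raid5_la 64 0 1 2 3
```

The printed string can then be passed to `dmsetup create foo --table '...'` once it has been checked by eye.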

For each possible permutation, we used `gdisk` to determine if the partition table was valid. We found that disk-3 and disk-1 presented a valid partition table if they were the first drive in the list; thus, we were able to rule out half of the permutations in a short amount of time:

gdisk -l /dev/mapper/foo

...

Number Start (sector) End (sector) Size Code Name

1 2048 23438817279 10.9 TiB 0700 primary

We did not know the exact sector count of the original volume, so we had to estimate it based on the ending sector reported by gdisk. We knew that there were 3 data disks, so the sector count had to be a multiple of three.
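One way to arrive at such an estimate is to take the last partition sector, add one because sectors are numbered from zero, and round up to the next multiple of three. This is a reconstruction of the arithmetic under that assumption rather than a record of exactly what we typed; `round_up` is a hypothetical helper.

```shell
# Round $1 up to the next multiple of $2.
round_up() {
    echo $(( ($1 + $2 - 1) / $2 * $2 ))
}

last_sector=23438817279            # "End (sector)" reported by gdisk
min_sectors=$((last_sector + 1))   # sectors are numbered from 0
round_up "$min_sectors" 3          # prints 23438817282
```

The result matches the length used in the dmsetup table.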

The next step was to use the file system checker (e2fsck / fsck.ext4) to determine which permutation had the fewest errors. Many of the permutations that we tested failed immediately because the file system checker did not recognize the data at all. However, in a few of the permutations the file system checker understood the file system enough to spew 1000s of lines of errors on the screen. We knew we were getting closer, but none of the file system checks seemed to complete with a reasonable number of errors.

This caused us to speculate that our initial guess of a 64k stripe size was incorrect. The next stripe size we tried was 256k and we began to see better results. Again many of the file system checks failed altogether, but the file system checker seemed to be doing better on some of the permutations. However, it still was not quite right. We had only been trying the raid5_la format, but the dm-raid module has the following possible formats:

- raid5_la RAID5 left asymmetric – rotating parity 0 with data continuation

- raid5_ra RAID5 right asymmetric – rotating parity N with data continuation

- raid5_ls RAID5 left symmetric – rotating parity 0 with data restart

- raid5_rs RAID5 right symmetric – rotating parity N with data restart

We added a second loop to test every RAID format for every permutation, and when it reached raid5_ls on the 23rd permutation, the file system checker became silent and took a very long time. Only rarely did it print a benign warning about some structure problem, which was probably already present in the original RAID array. We had found the correct configuration to recover this RAID volume!

While we had to figure this out by trial and error using a simple Perl script to discover the configuration, now you know that the MegaRAID controller uses the raid5_ls RAID type. This was the correct configuration for our drive:

- RAID disk ordering: 3,2,0,1

- RAID Stripe size: 256k

- RAID on-disk format: raid5_ls – left symmetric: rotating parity 0 with data restart
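To see why the on-disk format matters as much as the disk order, it helps to print where each chunk lands. The sketch below follows the left symmetric and left asymmetric layouts as we understand the Linux md driver to define them; it is illustrative only and `show_layout` is a hypothetical name. D0, D1, ... are consecutive data chunks of the volume and P is parity.

```shell
# Print which member disk holds each chunk for the first few stripes.
#   $1 = layout: "ls" (left symmetric) or "la" (left asymmetric)
#   $2 = number of disks, $3 = number of stripes to print
show_layout() {
    local layout="$1" disks="$2" stripes="$3"
    local stripe disk parity pos chunk line
    stripe=0
    while [ "$stripe" -lt "$stripes" ]; do
        # Both "left" layouts start parity on the last disk and move it
        # one disk to the left on every stripe.
        parity=$(( disks - 1 - stripe % disks ))
        line=""
        disk=0
        while [ "$disk" -lt "$disks" ]; do
            if [ "$disk" -eq "$parity" ]; then
                line="$line P"
            else
                if [ "$layout" = "ls" ]; then
                    # Symmetric: data starts right after parity and wraps.
                    pos=$(( (disk - parity - 1 + disks) % disks ))
                elif [ "$disk" -lt "$parity" ]; then
                    # Asymmetric: data fills disks left to right...
                    pos=$disk
                else
                    # ...skipping over the parity disk.
                    pos=$((disk - 1))
                fi
                chunk=$(( stripe * (disks - 1) + pos ))
                line="$line D$chunk"
            fi
            disk=$((disk + 1))
        done
        echo "stripe $stripe:$line"
        stripe=$((stripe + 1))
    done
}

echo "left symmetric:";  show_layout ls 4 4
echo "left asymmetric:"; show_layout la 4 4
```

The two layouts agree on the first stripe and diverge from the second one onward, which fits what we saw: the wrong format can still show a valid partition table at the start of the volume while the file system check falls apart.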

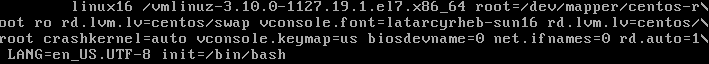

Now that the RAID volume was constructed we wanted to test that it would mount and see if we could access the original data. Modern file systems have journals that replay at mount time, and we needed to keep this read only because a journal replay of invalid data could cause corruption. Thus, we used the “noload” option while mounting to prevent replay:

mount -o ro,noload /dev/foo /mnt/tmp

The volume was read only because we used a read-only loopback device, so it was safe, but when trying to mount without the noload option it would refuse to mount because the journal replay failed.

Automating the Process

Whenever there is a lot of work to do, we always do our best to automate the process to save time and minimize user error. Below you can see the Perl script that we used to inspect the disk.

The script does not have any specific intelligence; it just prints the result of each test for human inspection. Of course, it needs to be tuned to the specific environment. All tests are done in a read-only mode, and the loopback devices must be configured before running the script.

When the file system checker displayed 1000s of lines of errors for a particular permutation, we had to kill that process from another window so the script would proceed and try the next permutation. In these cases there was so much text displayed on the screen that we would pipe the output through less or redirect it into a file to inspect it after the run.
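Both chores could be automated. The sketch below is not part of the script we ran; it assumes GNU coreutils `timeout` is available, and `run_logged` and `rank_logs` are hypothetical helper names. It caps the run time of each check, saves the output per permutation, and lists the logs by line count.

```shell
# Run a command with a time limit and save everything it prints.
#   $1 = log file, $2 = time limit in seconds, rest = command to run
run_logged() {
    local log="$1" limit="$2"
    shift 2
    # timeout(1) kills the command if it runs longer than the limit
    # and then exits with status 124.
    timeout "$limit" "$@" > "$log" 2>&1
}

# List the *.log files in a directory, fewest output lines first.
rank_logs() {
    local f
    for f in "$1"/*.log; do
        echo "$(wc -l < "$f") $f"
    done | sort -n
}

# Example (not executed here):
#   run_logged logs/22-raid5_ls.log 600 e2fsck -fn /dev/loop7
#   rank_logs logs
```

A short log is only a hint. Permutations that fail immediately also produce very little output, and the correct configuration ran quietly for a long time, so a generous time limit and a look at the log contents are still needed.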

This script is for informational use only; use it at your own risk! If you are in need of RAID volume disk recovery on a Linux system then we may be able to help with that. Let us know if we can be of service!

#!/bin/perl

# This program is free software; you can redistribute it and/or modify

# it under the terms of the GNU General Public License as published by

# the Free Software Foundation; either version 2 of the License, or

# (at your option) any later version.

#

# This program is distributed in the hope that it will be useful,

# but WITHOUT ANY WARRANTY; without even the implied warranty of

# MERCHANTABILITY or FITNESS FOR A PARTICULAR PURPOSE. See the

# GNU Library General Public License for more details.

#

# You should have received a copy of the GNU General Public License

# along with this program; if not, write to the Free Software

# Foundation, Inc., 59 Temple Place - Suite 330, Boston, MA 02111-1307, USA.

#

# Copyright (c) 2023 Linux Global, all rights reserved.

use strict;

use warnings;

my @p = (

[0,1,2,3], [0,1,3,2], [0,2,1,3], [0,2,3,1], [0,3,1,2], [0,3,2,1],

[1,0,2,3], [1,0,3,2], [1,2,0,3], [1,2,3,0], [1,3,0,2], [1,3,2,0],

[2,0,1,3], [2,0,3,1], [2,1,0,3], [2,1,3,0], [2,3,0,1], [2,3,1,0],

[3,0,1,2], [3,0,2,1], [3,1,0,2], [3,1,2,0], [3,2,0,1], [3,2,1,0]);

my $stripe = 256*1024/512;

my $n = 0;

foreach my $p (@p)

{

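# Only orderings that start with disk 3 or disk 1 showed a valid partition table.

next unless $p->[0] =~ /^[13]$/;

# raid5_zr, raid5_nr and raid5_nc are not dm-raid types (those suffixes exist only for raid6), so test the four RAID5 formats listed earlier.

for my $fmt (qw/raid5_la raid5_ra raid5_ls raid5_rs/)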

{

activate($p, $fmt);

}

$n++

}

sub activate

{

my ($p, $fmt) = @_;

system("losetup -d /dev/loop7; dmsetup remove foo");

print "\n\n========= $n $fmt: @$p\n";

my $dmsetup = "dmsetup create foo --table '0 23438817282 raid $fmt 1 $stripe "

. "4 - 7:$p->[0] - 7:$p->[1] - 7:$p->[2] - 7:$p->[3]'";

print "$dmsetup\n";

system($dmsetup);

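# Show the partition table as seen through this candidate layout.

system("gdisk -l /dev/mapper/foo |grep -A1 ^Num");

# Expose the first partition as a read-only loop device.

system("losetup -r -o 1048576 /dev/loop7 /dev/mapper/foo");

# Identify the file system, then check it without making changes (-n).

system("file -s /dev/loop7");

system("e2fsck -fn /dev/loop7 2>&1");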

}
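The `-o 1048576` passed to losetup in the script is the byte offset of the first partition. It follows from the start sector in the gdisk listing, assuming 512-byte logical sectors; the variable names below are only for illustration.

```shell
start_sector=2048      # "Start (sector)" from the gdisk listing
sector_size=512        # assumed logical sector size in bytes
offset=$((start_sector * sector_size))

# Dry run: print the command instead of executing it.
echo "losetup -r -o $offset /dev/loop7 /dev/mapper/foo"
```

If the recovered partition table shows a different start sector, the offset in the script needs to change to match.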